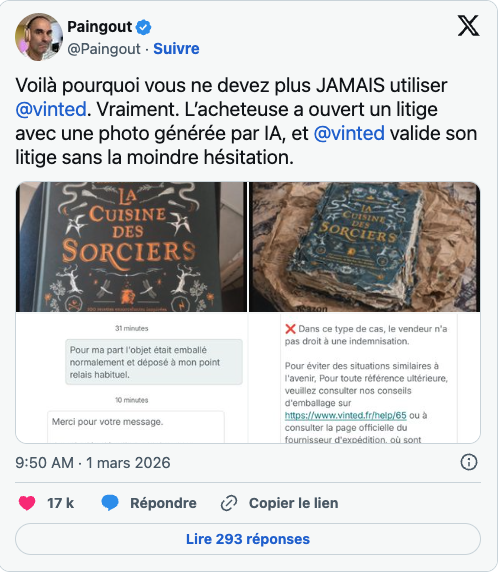

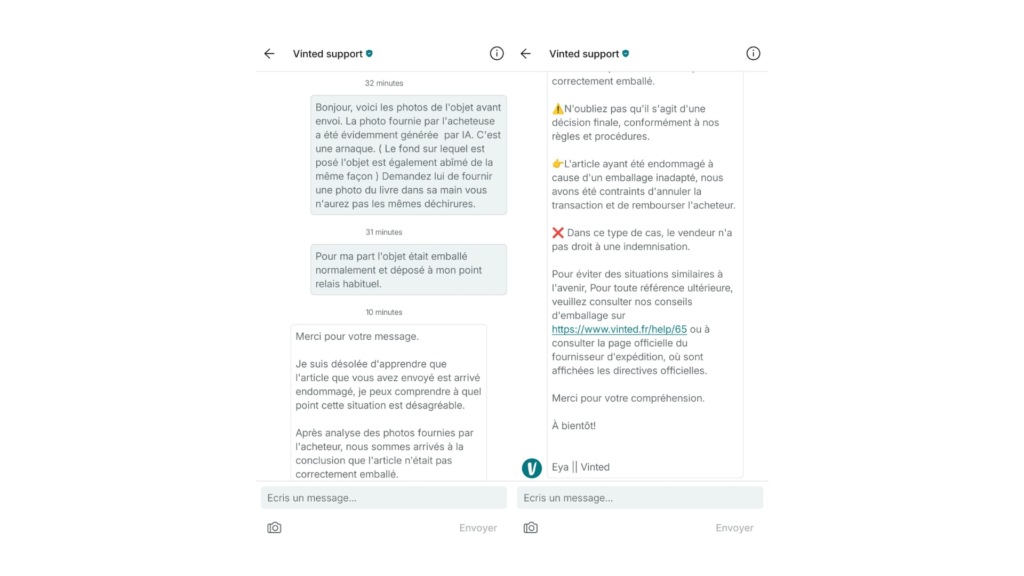

AI generated refund fraud is no longer theoretical. On March 1, 2026, it worked. A book was sold in perfect condition on Vinted. The buyer received it, generated a fake photo of it destroyed, submitted the image as dispute evidence — and won.

The seller lost both his item and his money. An AI model had generated the photo, and he spotted it immediately. Vinted’s support team ruled against him anyway.

This is the first publicly documented case of AI-fabricated visual evidence succeeding in an e-commerce dispute. It will not be the last.

What Happened on Vinted – and Why It Matters

A seller on Vinted sold a book in perfect condition. Standard transaction. The buyer received it, then opened a dispute and submitted a photo showing the book completely destroyed, placed on a crumpled Amazon box.

The tell was subtle but visible to anyone who knows what to look for: the background showed the same damage pattern as the book itself. A tell-tale artifact of generative image models, where the AI bleeds the damage aesthetic across the entire composition rather than isolating it to the object.

The seller flagged it. Vinted’s automated support system didn’t engage with the forensic argument. Buyer-first policy applied. Case closed.

What makes this case a landmark isn’t the amount of money involved. It’s the proof of concept it represents. AI generated refund fraud now has a documented, successful playbook. And it’s public.

The New Fraud Workflow — Start to Finish in Under Five Minutes

This is how AI generated refund fraud works in 2026:

- Order item from any marketplace

- Receive item in perfect condition

- Open a generative AI tool — Gemini Flash, Sora, or any image editor with inpainting

- Prompt: “add realistic damage to this product photo”

- Submit AI-generated damage image as dispute evidence

- Collect refund

- Keep item

Total time: under five minutes. Total cost: zero. Technical knowledge required: none.

In fact, tools like these can generate photorealistic “damaged goods” imagery in seconds, with no expertise needed beyond knowing what prompt to type. The economics are brutal for sellers — no recourse, no appeal, no recovery — and the architecture that was supposed to protect them is precisely what’s being exploited.

Why Dispute Systems Are Structurally Exposed

Photo evidence was a reasonable proxy for truth when producing a convincing fake required real skill — Photoshop expertise, time, risk of detection. Generative AI has collapsed that cost to zero.

But the verification infrastructure on most platforms hasn’t moved. Dispute systems were designed around a world where fabricating evidence was hard. That world no longer exists.

Widely available AI tools like ChatGPT, Gemini and Claude are making it easier than ever for bad actors to dupe brands, forcing retailers to reconsider how quickly they issue refunds and how much verification they require upfront.

The problem extends beyond damage photos. Fraudsters are also using AI to generate fake shipping receipts and even fabricate police reports after claiming an item was lost in transit. The entire evidence layer of e-commerce dispute resolution is now compromised.

And the numbers confirm this isn’t isolated. Among major retailers, there was a 330% increase in AI fraud in just two months. Overall AI-driven fraud attacks rose 1,210% last year, with combined losses reaching an estimated $1 billion.

The Vinted case is an individual-level exploit, but the same logic applies at scale.

This Is Not New — It’s the Next Phase

AI-generated visuals on second-hand platforms are not a new phenomenon. Fraudsters had already used AI-generated imagery to flood platforms like Vinted, Leboncoin, and eBay with fake fast-fashion listings: virtual studio shots of Shein-style items that sellers priced at multiples of their real value.

At UncovAI, we’ve watched AI-generated content move through e-commerce in distinct phases.

Phase 1 — Catalogue Spam. AI-generated product photos used to flood second-hand platforms with fake listings. Annoying. Costly. Ultimately a catalogue integrity problem.

Phase 2 — Evidence Fabrication. AI deployed directly against individual sellers and the dispute mechanisms designed to protect buyers. Same technology. Fundamentally different threat model.

We are now in Phase 2.

The gap between “this capability exists” and “this capability is being weaponized against you” is now measured in weeks. At its heart, this new wave of refund abuse is driven by a single, powerful principle: the manipulation of digital evidence submitted to merchants.

The Scale of What’s Coming

E-commerce returns are expected to cost brands $379 billion in 2026. An estimated one in every ten retail items returned for a refund is fraudulent.

Those figures were calculated before AI generated refund fraud became a documented, reproducible exploit with a five-minute workflow.

Deepfake detection firm Pindrop estimates that three in ten retail fraud attempts are now AI-generated, and some large chains report more than 1,000 AI bot calls per day.

Nearly three-quarters of U.S. companies report an increase in AI-powered fraud attempts over the past year, and roughly half of finance leaders say AI-generated fraud is now one of their biggest challenges in fraud prevention.

As a result, every platform that hasn’t updated its evidence verification assumptions is already behind.

What Needs to Change — For Platforms

Platforms need to treat submitted dispute photos as untrusted inputs, the same way security teams treat user-uploaded files. That means three structural changes:

Verification at submission. AI image forensics applied at the point of dispute filing, before human or automated review begins. Not after. Not as a secondary check. At the moment the image enters the system.

Behavioral context. A single disputed transaction is noise. Patterns across accounts, timing, dispute frequency, and outcomes are signal. AI generated refund fraud at scale leaves behavioral traces that image-level detection alone will miss.

Policy recalibration. Buyer-first defaults made sense when fabricating evidence was hard. Platforms need to revisit those defaults in light of what generation tools now make trivial. Generative AI has inverted the burden of proof. Restoring it requires infrastructure, not just policy intent.
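As a rough illustration of how these three changes could fit together, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `detector` callable stands in for whatever image-forensics service a platform uses (it is not a real API), the `Dispute` fields and route names are hypothetical, and the thresholds are placeholders rather than recommended values. The point is only the ordering: the photo is scored at submission, account history is weighed next, and the buyer-first default applies only when neither signal fires.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable

# All thresholds below are illustrative assumptions, not recommended values.
MIN_HISTORY = 5        # orders needed before the dispute rate is considered
IMAGE_THRESHOLD = 0.8  # detector score at or above which the photo is suspect
RATE_THRESHOLD = 0.3   # dispute rate at or above which a human reviews the case


class Route(Enum):
    STANDARD_REVIEW = "standard_review"      # existing buyer-first flow
    HUMAN_REVIEW = "human_review"            # account pattern looks unusual
    REQUEST_MORE_EVIDENCE = "more_evidence"  # photo flagged at submission


@dataclass
class Dispute:
    buyer_id: str
    image_bytes: bytes
    buyer_orders: int    # completed orders for this buyer
    buyer_disputes: int  # disputes this buyer has opened


def dispute_rate(orders: int, disputes: int) -> float:
    """Share of orders disputed; 0.0 when there is too little history to judge."""
    if orders < MIN_HISTORY:
        return 0.0
    return disputes / orders


def route_dispute(dispute: Dispute, detector: Callable[[bytes], float]) -> Route:
    """Score the photo first, then weigh account behavior, then pick a route."""
    score = detector(dispute.image_bytes)  # hypothetical forensics call, 0.0 to 1.0
    if score >= IMAGE_THRESHOLD:
        return Route.REQUEST_MORE_EVIDENCE
    if dispute_rate(dispute.buyer_orders, dispute.buyer_disputes) >= RATE_THRESHOLD:
        return Route.HUMAN_REVIEW
    return Route.STANDARD_REVIEW
```

Passing the detector in as a parameter keeps the routing logic testable without a live forensics service. Returning 0.0 for accounts with little history reflects the idea that a single disputed transaction is noise, not signal.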

The Legal Reality Platforms Are Ignoring

It’s worth stating clearly: this is fraud. Not a gray area. Not a loophole.

Under French law on deceptive commercial practices, submitting fabricated evidence in a commercial dispute carries up to two years’ imprisonment and €300,000 in fines, regardless of whether the platform detected it. Perpetrators face real legal risk, even though enforcement is lagging.

Platforms that knowingly fail to implement available detection measures may also find themselves facing questions about their own liability exposure as documented cases accumulate.

How UncovAI Helps Marketplaces and Trust & Safety Teams

UncovAI helps platforms detect AI-generated content before it becomes a liability — at the exact points in the workflow where it enters your system.

AI Image Forensics — Real-time detection of AI-generated and manipulated visuals submitted through dispute, listing, or onboarding flows, before they influence outcomes. Applied at submission, not after damage is done.

Synthetic Content Monitoring — Continuous scanning of marketplace catalogues to identify AI-generated listings, fake product photography, and coordinated spam at scale. Phase 1 and Phase 2 protection in a single pipeline.

Trust & Safety Intelligence — Cross-signal analysis across user behavior, content metadata, and transaction patterns to surface coordinated AI generated refund fraud that individual-level image detection misses.

The Vinted case is a documented proof of concept. The playbook is now public. The tools to execute it are free and require no technical skill. The only variable is whether your platform has detection infrastructure in place before this scales from individual exploit to industrial operation.

Frequently Asked Questions

What is AI generated refund fraud?

AI generated refund fraud is the use of generative AI tools to create fake photographic evidence — typically showing false damage to a product — which is then submitted to an e-commerce platform’s dispute system to obtain a fraudulent refund while keeping the original item.

How common is AI generated refund fraud in 2026?

In 2026, it is accelerating rapidly. Pindrop recorded a 330% increase in AI fraud among major retailers in just two months, and three in ten retail fraud attempts are now estimated to be AI-generated. The Vinted case in March 2026 was the first publicly documented successful use of AI-fabricated evidence in a marketplace dispute.

Can platforms detect AI-generated dispute photos?

Yes — with the right infrastructure. AI image forensics can identify artifacts in generative images that are invisible to human reviewers. The challenge is that most platforms apply photo review after a decision has already been influenced, rather than at the point of submission.

What should sellers do if they suspect AI generated refund fraud?

Document everything. Screenshot the submitted photo immediately. Run it through an AI image detection tool to generate a forensic report. Submit this as counter-evidence with your appeal. If the platform does not engage with forensic evidence, escalate to consumer protection authorities and, where applicable, file a police report for fraud.
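For sellers comfortable with a few lines of Python, one way to make the "document everything" step more robust is to fingerprint the saved evidence file. The sketch below is only an illustration: the function name and record fields are hypothetical, and it does not detect AI generation. It simply records a SHA-256 hash, file size, and UTC timestamp so you can later show that the file you kept is byte-for-byte the one you saved at the time.

```python
import hashlib
from datetime import datetime, timezone


def evidence_record(data: bytes, source: str) -> dict:
    """Return a timestamped fingerprint of an evidence file's exact bytes."""
    return {
        "source": source,  # label chosen by the seller, e.g. the saved file name
        "sha256": hashlib.sha256(data).hexdigest(),
        "size_bytes": len(data),
        "recorded_at_utc": datetime.now(timezone.utc).isoformat(),
    }
```

To use it, read the saved image in binary mode (for example `open(path, "rb").read()`) and keep the returned record alongside the screenshot and any forensic report.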

Is submitting AI-generated evidence in a dispute illegal?

Yes. In France, this constitutes a deceptive commercial practice carrying up to two years’ imprisonment and €300,000 in fines. The EU, UK, and US all enforce similar fraud statutes. The technology is new. The law is not.

Conclusion

AI generated refund fraud crossed from theoretical to operational on March 1, 2026. Today, the workflow is documented, the tools are free, and the exploit works against the systems most platforms currently have in place.

This is not a problem that will stabilize on its own. Generation technology improves every month. The artifacts that make AI images detectable today will be gone from the next model release. Platforms have an open window right now to build detection infrastructure before this scales, and it will not stay open.

If your trust and safety team is thinking about how to get ahead of it — let’s talk.
